WITNESS RADIO MILESTONES

Exclusive: Facebook Opens Up About False News

Published 8 years ago

News Feed, the algorithm that powers the core of Facebook, resembles a giant irrigation system for the world’s information. Working properly, it nourishes all the crops that different people like to eat. Sometimes, though, it gets diverted entirely to sugar plantations while the wheat fields and almond trees die. Or it gets polluted because Russian trolls and Macedonian teens toss in LSD tablets and dead raccoons.

For years, the workings of News Feed were rather opaque. The company as a whole was shrouded in secrecy. Little about the algorithms got explained and employees were fired for speaking out of turn to the press. Now Facebook is everywhere. Mark Zuckerberg has been testifying to the European Parliament via livestream, taking hard questions from reporters, and giving tech support to the Senate. Senior executives are tweeting. The company is running ads during the NBA playoffs.

In that spirit, Facebook is today making three important announcements on false news, to which WIRED got an early and exclusive look. In addition, WIRED was able to sit down for a wide-ranging conversation with eight generally press-shy product managers and engineers who work on News Feed to ask detailed questions about the workings of the canals, dams, and rivers that they manage.

The first new announcement: Facebook will soon issue a request for proposals from academics eager to study false news on the platform. Researchers who are accepted will get data and money; the public will get, ideally, elusive answers to how much false news actually exists and how much it matters. The second announcement is the launch of a public education campaign that will utilize the top of Facebook’s homepage, perhaps the most valuable real estate on the internet. Users will be taught what false news is and how they can stop its spread. Facebook knows it is at war, and it wants to teach the populace how to join its side of the fight. The third announcement—and the one the company seems most excited about—is the release of a nearly 12-minute video called “Facing Facts,” a title that suggests both the topic and the repentant tone.

The film stars the product and engineering managers who are combating false news and was directed by Morgan Neville, who won an Academy Award for 20 Feet from Stardom. That documentary was about backup singers, and this one essentially is too. It’s a rare look at the people who run News Feed: the nerds you’ve never heard of who run perhaps the most powerful algorithm in the world. In Stardom, Neville told the story through close-up interviews and B-roll of his protagonists shaking their hips on stage. This one is told through close-up interviews and B-roll of his protagonists staring pensively at their screens.

In many ways, News Feed is Facebook: It’s an algorithm composed of thousands of factors that determines whether you see baby pictures, white papers, shitposts, or Russian agitprop. Facebook typically guards information about it the way the Army guards Fort Knox. This makes any information about it valuable, which makes the film itself valuable. And right from the start, Neville signals that he’s not going to merely scoop out a bowl of peppermint propaganda. The opening music is slightly ominous, leading into the voice of John Dickerson, of CBS News, intoning about the bogus stories that flourished on the platform during the 2016 election. Critical news headlines blare, and Facebook employees, one carrying a skateboard and one a New Yorker tote, move methodically up the stairs into headquarters.


The message is clear: Facebook knows it screwed up, and it wants us all to know it knows it screwed up. The company is confessing and asking for redemption. “It was a really difficult and painful thing,” intones Adam Mosseri, who ran News Feed until recently, when he moved over to run product at Instagram. “But I think the scrutiny was fundamentally a helpful thing.”

After the apology, the film moves into exposition. The product and engineering teams explain the importance of fighting false news and some of the complexities of that task. Viewers are taken on a tour of Facebook’s offices, where everyone seems to work hard and where there’s a giant mural of Alan Turing made of dominoes. At least nine times during the film, different employees scratch their chins.

Oddly, the most clarifying and energizing moments in “Facing Facts” involve whiteboards. There’s a spot three and a half minutes in when Eduardo Ariño de la Rubia, a data science manager for News Feed, draws a grid with X and Y axes. He’s charismatic and friendly, and he explains that posts on Facebook can be broken into four categories, based on the intent of the author and the truthfulness of the content: innocent and false; innocent and true; devious and false; devious and true. It’s the last category, which includes examples of cherry-picked statistics, that might be the most vexing.

A few minutes later, Dan Zigmond—author of the book Buddha’s Diet, incidentally—explains the triptych through which troublesome posts are countered: remove, reduce, inform. Terrible things that violate Facebook’s Terms of Service are removed. Clickbait is reduced. If a story appears fishy to fact-checkers, readers are informed. Perhaps they will be shown related stories, or more information on the publisher. It’s like a parent who doesn’t take the cigarettes away but who drops down a booklet on lung cancer and then stops taking them to the drug store. Zigmond’s whiteboard philosophy is also at the core of a Hard Questions blog post Facebook published today.
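Zigmond’s remove, reduce, inform triptych can be read as a simple decision rule. The sketch below is a hypothetical illustration, not Facebook’s code. The three boolean inputs (`violates_terms`, `is_clickbait`, `disputed_by_fact_checkers`) are assumed signals standing in for whatever classifiers and fact-checker ratings the company actually uses.

```python
from enum import Enum


class Action(Enum):
    REMOVE = "remove"   # take the post down entirely
    REDUCE = "reduce"   # keep it up but show it to fewer people
    INFORM = "inform"   # attach context, such as related stories


def triage(violates_terms: bool, is_clickbait: bool,
           disputed_by_fact_checkers: bool) -> list[Action]:
    """Hypothetical remove/reduce/inform rule; all inputs are assumed signals."""
    # Terms of Service violations are removed outright; nothing else applies.
    if violates_terms:
        return [Action.REMOVE]
    actions = []
    # Clickbait stays on the platform but is demoted in ranking.
    if is_clickbait:
        actions.append(Action.REDUCE)
    # Stories that fact-checkers flag get extra context for readers.
    if disputed_by_fact_checkers:
        actions.append(Action.INFORM)
    return actions  # an empty list means the post is left alone


print(triage(violates_terms=False, is_clickbait=True, disputed_by_fact_checkers=True))
# [<Action.REDUCE: 'reduce'>, <Action.INFORM: 'inform'>]
```

In this sketch, removal short-circuits the other two responses, while reduce and inform can apply to the same post.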

The central message of the film is that Facebook really does care profoundly about false news. The company was slow to realize the pollution building up in News Feed, but now it is committed to cleaning it up. Not only does Facebook care, it’s got young, dedicated people who are on it. They’re smart, too. John Hegeman, who now runs News Feed, helped build the Vickrey-Clarke-Groves auction system for Facebook advertising, which has turned it into one of the most profitable businesses of all time.

The question for Facebook, though, is no longer whether it cares. The question is whether the problem can be solved. News Feed has been tuned, for years, to maximize our attention and in many ways our outrage. The same features that incentivized publishers to create clickbait are the ones that let false news fly. News Feed has been nourishing the sugar plantations for a decade. Can it really help grow kale, or even apples?

To try to get at this question, on Monday, I visited with the nine stars of the film, who sat around a rectangular table in a Facebook conference room and explained the complexities of their work. (A full transcript of the conversation was also published.) The company has made all sorts of announcements since December 2016 about its fight against false news. It has partnered with fact-checkers, limited the ability of false news sites to make money off their schlock, and created machine-learning systems for combatting clickbait. And so I began the interview by asking what had mattered most.

The answer, it seems, is both simple and complex. The simple part is that Facebook has found that just strictly applying its rules (“blocking and tackling,” Hegeman calls it) has knocked many purveyors of false news off the platform. The people who spread malarkey also often set up fake accounts or break basic community standards. It’s like a city police force that cracks down on the drug trade by arresting people for loitering.

In the long run, though, Facebook knows that complex machine-learning systems are the best tool. To truly stop false news, you need to find false news, and you need machines to do that because there aren’t enough humans around. And so Facebook has begun integrating systems—used by Instagram in its efforts to battle meanness—based on human-curated datasets and a machine-learning product called DeepText.

Here’s how it works. Humans, perhaps hundreds of them, go through tens or hundreds of thousands of posts identifying and classifying clickbait: “Facebook left me in a room with nine engineers and you’ll never believe what happened next.” This headline is clickbait; this one is not. Then Facebook unleashes its machine-learning algorithms on the data the humans have sorted. The algorithms learn the word patterns that humans consider clickbait, and they learn to analyze the social connections of the accounts that post it. Eventually, with enough data, enough training, and enough tweaking, the machine-learning system should become as accurate as the people who trained it, and a heck of a lot faster.
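The human-labels-then-machine-learning loop described above can be illustrated with a deliberately tiny text classifier. This is a minimal sketch that swaps in multinomial naive Bayes with add-one smoothing. It is not DeepText and not Facebook’s system, it looks only at word patterns (it ignores the social-connection signals), and the `TRAINING` headlines are invented stand-ins for human-labeled data.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase a headline and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())


class NaiveBayesClickbait:
    """Multinomial naive Bayes over headline words (illustrative stand-in only)."""

    def __init__(self) -> None:
        self.word_counts = {True: Counter(), False: Counter()}
        self.doc_counts = {True: 0, False: 0}
        self.vocab: set[str] = set()

    def fit(self, examples: list[tuple[str, bool]]) -> "NaiveBayesClickbait":
        # Each example is (headline, human label), where True means clickbait.
        for headline, is_clickbait in examples:
            self.doc_counts[is_clickbait] += 1
            self.word_counts[is_clickbait].update(tokenize(headline))
        self.vocab = set(self.word_counts[True]) | set(self.word_counts[False])
        return self

    def _log_score(self, headline: str, label: bool) -> float:
        # log P(label) plus the sum of log P(word | label); add-one smoothing
        # keeps unseen words from zeroing out the probability.
        score = math.log(self.doc_counts[label] / sum(self.doc_counts.values()))
        total = sum(self.word_counts[label].values())
        for word in tokenize(headline):
            count = self.word_counts[label][word]
            score += math.log((count + 1) / (total + len(self.vocab)))
        return score

    def predict(self, headline: str) -> bool:
        return self._log_score(headline, True) > self._log_score(headline, False)


# Hypothetical human-labeled headlines (True = clickbait).
TRAINING = [
    ("You'll never believe what happened next", True),
    ("This one weird trick will shock you", True),
    ("You won't believe these photos", True),
    ("What happened next will amaze you", True),
    ("Senate committee schedules hearing on data privacy", False),
    ("Central bank holds interest rates steady", False),
    ("City council approves new transit budget", False),
    ("Researchers publish study on crop yields", False),
]

model = NaiveBayesClickbait().fit(TRAINING)
print(model.predict("You won't believe what happened at the meeting"))  # True
print(model.predict("City council holds hearing on budget"))  # False
```

A production system would train on far more labeled data and many more signals. The shape of the loop is still the same: people label, and the model learns which patterns go with which label.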

In addition to identifying clickbait, the company used the system to try to identify false news. This problem is harder. For one, it’s not as simple as analyzing a discrete chunk of text, like a headline. For another, as Tessa Lyons, a product manager helping to oversee the project, explained in our interview, truth is harder to define than clickbait. So Facebook has created a database of all the stories flagged by the fact-checking organizations it has partnered with since late 2016. It then combines this data with other signals, including reader comments, to try to train the model. The system also looks for duplication, because, as Lyons says, “the only thing cheaper than creating fake news is copying fake news.” Facebook does not, I was told in the interview, actually read the content of the article and try to verify it. That is surely a project for another day.
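Lyons’s point about copying suggests checking new posts for near-duplicates of stories already in the fact-checked database. The article does not say how Facebook detects duplication. The sketch below swaps in a standard technique, word shingling with Jaccard similarity. The 3-word shingle size, the 0.5 threshold, and the `FLAGGED` list are all assumptions made for illustration.

```python
import re


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping k-word sequences ("shingles") in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Share of shingles the two texts have in common, from 0.0 to 1.0."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def find_copies(candidate: str, flagged: list[str],
                threshold: float = 0.5) -> list[str]:
    """Return previously flagged stories that the candidate closely copies."""
    cand = shingles(candidate)
    return [story for story in flagged
            if jaccard(cand, shingles(story)) >= threshold]


# Hypothetical database of stories already rated false by fact-checkers.
FLAGGED = ["Morgue employee cremated by mistake while taking a nap"]

print(find_copies("BREAKING: Morgue employee cremated by mistake while taking a nap", FLAGGED))
# ['Morgue employee cremated by mistake while taking a nap']
print(find_copies("City council approves new transit budget", FLAGGED))  # []
```

Comparing every post against every flagged story does not scale. At platform size, this kind of check is usually approximated with hashing schemes such as MinHash, but the similarity idea is the same.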

Interestingly, the Facebook employees explained, all clickbait and false news are treated the same, no matter the domain. Consider these three stories that have spread on the platform in the past year.

“Morgue employee cremated by mistake while taking a nap.” “President Trump orders the execution of five turkeys pardoned by Obama.” “Trump sends in the feds— Sanctuary City Leaders Arrested.”

The first is harmless; the second involves politics, but it’s mostly harmless. (In fact it’s rather funny.) The third could scare real people and bring protesters into the streets. Facebook could, theoretically, deal with each of these kinds of false news differently. But according to the News Feed employees I spoke with, it does not. All headlines pass through the same system and are evaluated the same way. In fact, all three of these examples seem to have gotten through and started to spread.

Why doesn’t Facebook give political news strict scrutiny? In part, Lyons said, because stopping the trivial stories helps the company stop the important ones. Mosseri added that weighting different categories of misinformation differently might be something that the company considers later. “But with this type of integrity work I think it’s important to get the basics done well, make real strong progress there, and then you can become more sophisticated,” he said.

Behind all this, though, lies a larger question. Is it better to keep adding new systems on top of the core algorithm that powers News Feed? Or might it be better to radically change News Feed?

I pushed Mosseri on this question. News Feed is based on hundreds, or perhaps thousands, of factors, and as anyone who has run a public page knows, the algorithm rewards outrage. A story titled “Donald Trump is a trainwreck on artificial intelligence” will spread on Facebook. A story titled “Donald Trump’s administration begins to study artificial intelligence” will go nowhere. Both stories could be true, and the first headline isn’t clickbait. But it pulls on our emotions. For years, News Feed, like the tabloids, has heavily rewarded this kind of story, in part because the ranking was heavily based on simple factors that correlate with outrage and immediate emotional reactions.

Now, according to Mosseri, the algorithm is starting to take into account more serious factors that correlate with a story’s quality, not just its emotional tug. In our interview, he pointed out that the algorithm now gives less value to “lighter weight interactions like clicks and likes.” In turn, it is putting more priority on “heavier weight things like how long do we think you’re going to watch a video for? Or how long do we think you’re going to read an article for? Or how informative do you think you’d say this article is if we asked you?” News Feed, in a new world, might give more value to a well-read, informative piece about Trump and artificial intelligence, instead of just a screed.
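Mosseri’s description amounts to reweighting the predictions that feed each story’s score. This toy sketch assumes a simple weighted sum. The four signal names, both weight sets, and the two example stories are invented for illustration, and the real News Feed ranks on hundreds or thousands of factors.

```python
from dataclasses import dataclass


@dataclass
class Predictions:
    """Hypothetical per-story predictions for one viewer."""
    p_click: float             # chance the viewer clicks ("lighter weight")
    p_like: float              # chance the viewer likes ("lighter weight")
    expected_read_secs: float  # predicted reading or viewing time ("heavier")
    p_informative: float       # predicted "informative" survey answer ("heavier")


# Invented weights: the old mix leans on clicks and likes, the new one on
# reading time and perceived informativeness.
OLD_WEIGHTS = {"p_click": 1.0, "p_like": 1.0,
               "expected_read_secs": 0.0, "p_informative": 0.0}
NEW_WEIGHTS = {"p_click": 0.2, "p_like": 0.2,
               "expected_read_secs": 0.02, "p_informative": 1.0}


def score(pred: Predictions, weights: dict[str, float]) -> float:
    """Weighted sum of the predicted signals."""
    return sum(w * getattr(pred, name) for name, w in weights.items())


def rank(stories: list[tuple[str, Predictions]],
         weights: dict[str, float]) -> list[str]:
    """Return story titles ordered from highest to lowest score."""
    ordered = sorted(stories, key=lambda s: score(s[1], weights), reverse=True)
    return [title for title, _ in ordered]


STORIES = [
    ("screed", Predictions(p_click=0.30, p_like=0.25,
                           expected_read_secs=10, p_informative=0.1)),
    ("explainer", Predictions(p_click=0.10, p_like=0.05,
                              expected_read_secs=120, p_informative=0.7)),
]

print(rank(STORIES, OLD_WEIGHTS))  # ['screed', 'explainer']
print(rank(STORIES, NEW_WEIGHTS))  # ['explainer', 'screed']
```

Under the old weights, the screed scores 0.55 against the explainer’s 0.15. Under the new weights, the explainer scores 3.13 against 0.41, so the same two stories swap places without any change to the stories themselves.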


Perhaps the most existential question for Facebook is whether the nature of its business inexorably helps the spread of false news. Facebook makes money by selling targeted ads, which means it needs to know how to target people. It gathers as much data as it can about each of its users. This data can, in turn, be used by advertisers to find and target potential fans who will be receptive to their message. That’s useful if an advertiser like Pampers wants to sell diapers only to the parents of newborns. It’s not great if the advertiser is a fake-news purveyor who wants to find gullible people who can spread his message. In a podcast with Bloomberg, Cyrus Massoumi, who created a site called Mr. Conservative, which spread all kinds of false news during the 2016 election, explained his modus operandi. “There’s a user interface, facebook.com/ads/manager, and you create ads and then you create an image and advert, so let’s say, for example, an image of Obama. And it will say ‘Like if you think Obama is the worst president ever.’ Or, for Trump, ‘Like if you think Trump should be impeached.’ And then you pay a price for those fans, and then you retain them.”

In response to a question about this, Ariño de la Rubia noted that the company does go after any page it suspects of publishing false news. Massoumi, for example, now says he can’t make any money from the platform. “Is there a silver bullet?” Ariño de la Rubia asked. “There isn’t. It’s adversarial, and misinformation can come from any place that humans touch and humans can touch lots of places.”

Pushed on the related question of the possibility of shutting down political Groups into which users have put themselves, Mosseri noted that it would indeed stop some of the spread of false news. But, he said, “you’re also going to reduce a whole bunch of healthy civic discourse. And now you’re really destroying more value than problems that you’re avoiding.”

Should Facebook be cheered for its efforts? Of course. Transparency is good, and the scrutiny from journalists and academics (or at least most academics) will be good. But to some close analysts of the company, it’s important to note that this is all coming a little late. “We don’t applaud Jack Daniels for putting warning labels about drinking while pregnant. And we don’t cheer GM for putting seat belts and airbags in their cars,” says Ben Scott, a senior adviser to the Open Technology Institute at the New America Foundation. “We’re glad they do, but it goes with the territory of running those kinds of businesses.”

Ultimately, the most important question for Facebook is how well all these changes work. Do the rivers and streams get clean enough that they feel safe to swim in? Facebook knows that it has removed a lot of claptrap from the platform. But what will happen in the American elections this fall? What will happen in the Mexican elections this summer?

Most importantly, what will happen as the problem gets more complex? False news is only going to get more complicated, as it moves from text to images to video to virtual reality to, one day, maybe, computer-brain interfaces. Facebook knows this, which is why the company is working so hard on the problem and talking so much. “Two billion people around the world are counting on us to fix this,” Zigmond said.

Related posts:

EU gives Facebook and Google three months to tackle extremist content

EU gives Facebook and Google three months to tackle extremist content

Tech giants should join global campaign to seek land rights for all: experts

Tech giants should join global campaign to seek land rights for all: experts

Privacy Vs Free Expression: Global News Media Implications Of The EU’S General Data Protection Regulation (GDPR)

Privacy Vs Free Expression: Global News Media Implications Of The EU’S General Data Protection Regulation (GDPR)

Land Inquiry Commission opens investigations into the eviction of natives from former government ranches in Bunyoro sub-region

Land Inquiry Commission opens investigations into the eviction of natives from former government ranches in Bunyoro sub-region

You may like

-

More African governments are trying to control what’s being said on social media and blogs

-

Uganda ‘feels’ Tanzania’s tightening grip on social media

-

Access Now calls for global independent audit of Facebook data practices

-

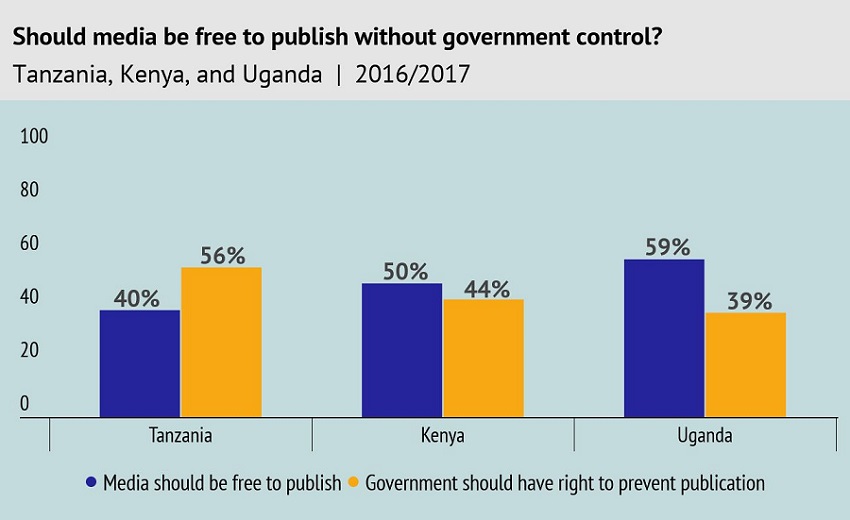

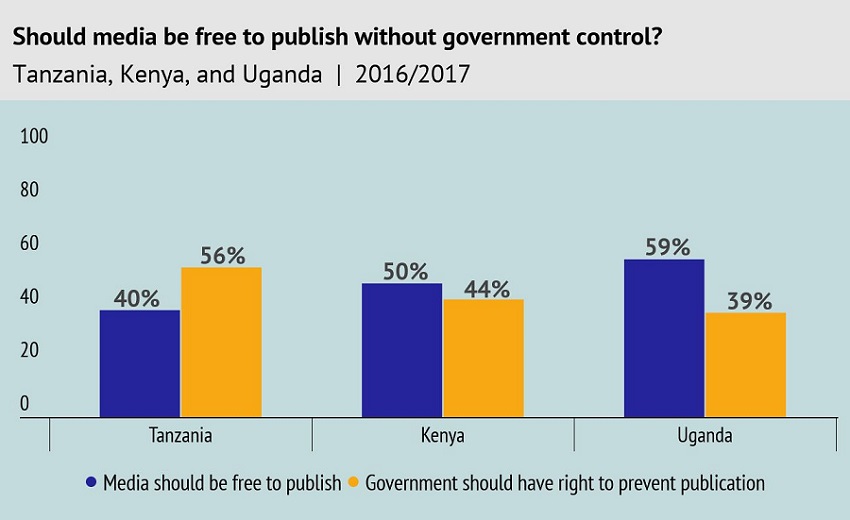

Do East Africans still want a free media?

-

Privacy Vs Free Expression: Global News Media Implications Of The EU’S General Data Protection Regulation (GDPR)

-

Ug.LandGrab: Court in Mubende tries bona fide occupants for resisting illegal evictions

MEDIA FOR CHANGE NETWORK

Uganda moves toward a Bamboo Policy to boost environmental conservation and green growth.

Published January 21, 2026

By Witness Radio team.

Uganda’s move to develop a national bamboo policy aims to boost environmental conservation and create green jobs, addressing the country’s urgent unemployment issues among the working class.

Bamboo is a critical tool in fighting climate change due to its rapid growth, high carbon sequestration capacity, and ability to produce 35% more oxygen than equivalent trees. As a fast-growing, renewable resource, it restores degraded land, provides sustainable materials that replace emission-intensive products like concrete, and offers a resilient, low-carbon bioenergy source.

Bamboo’s potential is outlined in the existing National Bamboo Strategy. Still, stakeholders stress that a formal policy involving entrepreneurs, farmers, and processors is essential to remove regulatory uncertainty and foster sector growth.

“The strategy is a good document, but it was developed largely through desk research. It did not fully involve entrepreneurs, farmers, and processors who are already working in the bamboo industry,” said Sjaak de Blois, chairman of Bamboo Uganda, encouraging stakeholders to see their role as vital.

The bamboo policy is currently at an early consultative stage, with no draft yet submitted to the cabinet or parliament. Recent consultations brought together representatives from eight government ministries, private-sector bamboo actors, and development partners to begin aligning the strategy with practical regulatory needs.

“What we have now is the starting point,” De Blois said. “The next step is to take the strategy and make it more practical, more market-driven, and more Ugandan. The next step is to move from having a plan to adopting a policy.”

Bamboo currently falls under several regulatory frameworks, with no single authority overseeing the sector. The policy push is being driven in part by Bamboo Uganda, a membership-based organization bringing together bamboo farmers and processors, among others. The organization aims to play a coordinating role similar to that historically played by the Uganda Coffee Development Authority in the coffee sector.

“If you want to make a sector meaningful for a country, you need coordination. Coffee became what it is because of an institution that aligned farmers, traders, exporters, and regulators. Bamboo needs the same kind of coordination,” he said.

The policy process is supported by the Belgian development agency, which is funding consultations and facilitating dialogue between the government and the private sector.

Industry players say the absence of clear regulations has constrained investment despite growing demand.

“At the moment, bamboo is everywhere and nowhere at the same time. As a farmer, you talk to forestry; as a charcoal producer, you talk to energy; as a builder, you talk to works. There is no single framework that enables the industry to function,” De Blois added.

Supporters of the policy argue that bamboo could play a significant role in environmental conservation. Bamboo grows rapidly, regenerates after harvesting, and can be harvested annually for decades, reducing pressure on natural forests.

According to Global Forest Watch (GFW), Uganda lost 1.2 million hectares of tree cover between 2001 and 2024, representing a 15% decline from the 2000 baseline. Bamboo has been identified as a key species for restoration.
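Taken together, the two Global Forest Watch figures imply the approximate size of the 2000 baseline. Here is a back-of-the-envelope check, assuming the 15% figure is rounded:

```latex
\text{baseline}_{2000} \approx \frac{1.2\ \text{million ha}}{0.15} = 8\ \text{million ha}
```

That is, Uganda had roughly 8 million hectares of tree cover in 2000, of which about 1.2 million hectares were lost by 2024.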

“One acre of bamboo that is harvested sustainably can prevent the destruction of hundreds of acres of natural forest,” De Blois said. “If we get this right, bamboo can help reverse deforestation rather than contribute to it.”

Ms. Susan Kaikara, from the Ministry of Water and Environment, emphasized bamboo’s potential to drive Uganda’s green-growth agenda.

“Establishing a coherent national policy framework will strengthen coordination, inspire investment, and unlock bamboo’s full potential as a pillar of Uganda’s green economy,” she said.

Uganda’s charcoal market alone is estimated to be worth hundreds of millions of dollars annually, much of it supplied through unsustainable wood harvesting. Industry actors say certified bamboo charcoal plantations could offer a cleaner alternative.

“If they allow us to certify bamboo charcoal plantations, then we can get a trade license to compete or to work together with the existing market. We will reverse deforestation. We would enter an industry of about 500,000 hectares, creating smart, green jobs. We can digitalize them to make them attractive through bamboo agroforestry. So again, those things need a policy,” he added.

Bamboo is also viewed as a climate-friendly crop due to its high capacity for carbon sequestration. Its rapid growth enables it to absorb large amounts of carbon dioxide, while its extensive root system improves soil structure and increases long-term carbon storage.

“When you look at carbon sequestration, bamboo offers several advantages. Residues from harvested bamboo can be converted into biochar, locking carbon into the soil for long periods. When you also see the sequestration per acre compared to many other trees, it is five or six times higher. So, we sequester a lot,” De Blois said.

Stakeholders say that if the policy process progresses as planned, bamboo could emerge as one of Uganda’s key green growth sectors within the next decade.

“Policy making takes time. But what is important is that we have started the conversation with all the right ministries in the room. From here, it is about taking steady, practical steps,” he concluded.


WITNESS RADIO MILESTONES

A Global Report reveals that Development Banks’ Accountability Systems are failing communities.

Published December 4, 2025

By Witness Radio team.

For decades, development projects have been funded to address some of the world’s most pressing problems, including poverty, wildlife conservation, and climate change. However, what unfolds on the ground is sometimes the opposite of development. Instead of benefits, these projects have often harmed the very people they are supposed to support.

Efforts to address such harm led various development banks to establish Independent Accountability Mechanisms (IAMs). Communities affected by these projects, meanwhile, often feel betrayed by national court systems, which leaves them overlooked and vulnerable and underscores the urgent need for effective justice.

According to experts in development financing, since the early 1990s, development banks have sought to address and mitigate harm through IAMs—non-judicial grievance mechanisms that provide a direct avenue for impacted communities to raise concerns, engage with project implementers, and obtain remedies for the harm they have experienced.

The study, conducted by Accountability Counsel and titled “Accountability in Action or Inaction? An Empirical Study of Remedy Delivery in Independent Accountability Mechanisms,” shows that while IAMs exist, they have fallen short of delivering remedies, underscoring the urgent need for reform to restore community trust and hope.

In compiling the report, researchers reviewed 2,270 complaints across 16 IAMs and conducted 45 interviews covering 25 cases globally.

The report reveals a persistent gap between the promise of remedies and their realization: only 15% of closed complaints led to commitments, and just 10% achieved full completion.

The findings highlight ongoing challenges, including inadequate implementation, limited monitoring, and persistent power imbalances, which continue to block communities from accessing meaningful remedies and demand immediate reform.

“The consequences of these institutional gaps are severe. As these cases show, institutional silence can exacerbate risk, while meaningful intervention can help de-escalate it,” the report adds.

Uganda is among the countries where communities have sought justice through these accountability mechanisms. Between 2006 and 2010, communities in one of Uganda’s districts were brutally evicted by a UK-based company that was growing trees in the area.

The company was formerly an investee of the Agri-Vie Agribusiness Fund, a private equity fund supported by the International Finance Corporation (IFC), the private sector arm of the World Bank Group. The community filed a Complaint with the IFC’s accountability mechanism, the Compliance Advisor Ombudsman (CAO).

“We complained to this body in 2011, hoping for justice, but over 15 years later our people are still struggling, living miserably, some without homes,” a community land and environmental defender told the Witness Radio team.

According to the affected residents, the CAO process did not deliver the success or meaningful compensation they had hoped for.

Between 2013 and 2014, the communities, with support from the CAO, signed a final agreement with the company to address the harm. Among other commitments, the agreement included resettlement of the affected communities.

In its 28-page report published in 2015, titled A Story of Community-Company Dispute Resolution in Uganda, the CAO wrote: “With the agreements concluded, implementation is gathering pace. As agreed, the company has begun extending development assistance to both cooperatives, and the process of restoring and enhancing livelihoods has commenced.”

The first step taken by both cooperatives was to acquire land. In late 2013, the Mubende Cooperative bought 500 acres of ‘fertile agricultural land’ in the Mubende district. Their vision was to allocate a certain percentage of the land for resettlement, with the remainder utilized for farming projects.

Reports from the ground indicate that communities remain dissatisfied with the process, claiming it failed to address their concerns fully and highlighting the urgent need for more effective remedy systems.

“When you say that people are well, it is really a total lie. Many people were never compensated or resettled. Even those who got a portion of land say they have never seen a fertile land—I have never seen it, because people are living or cultivating on rocky, infertile lands,” the defender further revealed.

The struggle faced by the Ugandan community is not unique. Their experience mirrors what the Accountability Counsel report identifies worldwide. Although communities harmed by bank-financed projects have registered more than 2,000 complaints globally, there has been no comprehensive system-wide analysis of whether and how often these mechanisms deliver meaningful remedies, defined as tangible, material outcomes that repair harm and improve lives.

Beyond the limited success of such IAMs, the report notes that across interviews covering 25 complaints, 84% referenced retaliation, violence, or threats of violence. This is an alarming indicator of the risks faced by communities seeking justice, and it demands immediate attention and action.

“Government officials and company representatives were frequently implicated in efforts to suppress dissent. This not only reduces the likelihood of achieving a substantial remedy, but also suppresses the willingness of community members to speak honestly and openly about Complaint outcomes,” the report further notes.

Further, it reveals that communities described a range of retaliatory tactics, including physical clashes, arrests, detentions, fatalities, intimidation and harassment, death threats, and anonymous warning letters, among others.

“Remedy must be reimagined not as a peripheral concern but as a core responsibility of development institutions. It must be adequately resourced, independently monitored, and centered around the needs and voices of affected people,” the report adds.

The report recommends that development banks and IAMs establish a Remedy Framework with clear standards to ensure remedies are timely, adequate, and community-centered, and it encourages stakeholders to prioritize systemic reform for better justice outcomes.

The report also urges development banks and their accountability mechanisms to make remedies a foundational element of responsible finance by adopting institutional frameworks that prioritize redress, empowering IAMs to oversee and enforce commitments, and incorporating the outcomes of IAM processes into project evaluations and institutional learning.


MEDIA FOR CHANGE NETWORK

Young activists fight to be heard as officials push forward on devastating project: ‘It is corporate greed’

Published August 27, 2025

“We refuse to inherit a damaged planet and devastated communities.”

Youth climate activists in Uganda protesting the East African Crude Oil Pipeline, or EACOP, are frustrated with the government’s response to their demonstration as the years-long project moves forward.

According to the country’s Daily Monitor, youth activists organized with End Fossil Occupy Uganda took to the streets of Kampala in early August to protest EACOP. The pipeline, under construction since about 2017 and now 62 percent complete, is set to transport crude oil from Uganda’s Tilenga and Kingfisher fields through Tanzania to the Indian Ocean port of Tanga by 2026.

Activists pointed to the toll the project has already taken. Group spokesperson Felix Musinguzi said that around 13,000 people “have lost their land with unfair compensation” and estimated that around 90,000 more in Uganda and Tanzania could be affected. End Fossil Occupy Uganda has also warned of risks to vital water sources, including Lake Victoria, which it says 40 million people rely on.

The group has been calling on financial institutions to withdraw funding for the project. Following a demonstration at Stanbic Bank earlier in the month, 12 activists were arrested, according to the Daily Monitor.

Some protesters were seen holding signs reading “Every loan to big oil is a debt to our children” and “It’s not economic development; it is corporate greed.”

Meanwhile, the regional newspaper says the government has described the activist efforts as driven by foreign actors who mean to subvert economic progress.

EACOP’s website notes that its shareholders include French multinational TotalEnergies, which owns 62 percent of the company’s shares, along with Uganda National Oil Company, Tanzania Petroleum Development Corporation, and China National Offshore Oil Corporation.

The wave of young people taking action against EACOP could be seen as a sign of growing public frustration over infrastructure projects that promise economic gain while harming local communities and ecosystems. Activists say residents face costly threats from pipeline development, such as forced displacement and the loss of livelihoods.

Environmental hazards to Lake Victoria could also disrupt water supplies and food systems, with potential financial and health consequences. Just 10 years ago, a pipeline oil spill in Kenya caused a humanitarian crisis. The Kenya Pipeline Company reportedly attributed the spill to pipeline corrosion; the leaked oil contaminated the Thange River and caused severe illness.

The EACOP project has already locked the region into close to a decade of development, and concerns about the pipeline and continued investments in carbon-intensive systems go back just as long. Youth activists, as well as concerned citizens of all ages, say efforts to move toward climate resilience can’t wait. “As young people, we refuse to inherit a damaged planet and devastated communities,” Musinguzi said, per the Monitor.

Source: The Cool Down

Related posts:

Put people above profits – Climate Activists urge Total to defund EACOP

EACOP: The number of activists arrested for opposing the project is already soaring in just a few months of 2025

EACOP activism under Siege: Activists are reportedly criminalized for opposing oil pipeline project in Uganda.

The East African Court of Justice fixes the ruling date for a petition challenging the EACOP project.

More than 500 Masindi residents live in fear as a tycoon targets their land.

Kiryandongo farmer accuses minister of grabbing 100-acre land

“We are facing increased violent land dispossessions and climate injustices” – African women.

Kassanda businessmen accused of a second attempt to grab an 86-year-old farmer’s land despite court orders.

Breaking: Ugandan Court jails eight Anti-EACOP activists as crackdown on dissent deepens.

African women are rising for climate justice and reparations on the inaugural continental day of action.

Ugandan Farmers Sue EACOP in London in Last Minute Effort to Stop Crude Oil Pipeline

Agroecological farming: EAC Bill moves to Parliament to establish a regional legal framework to protect and promote sustainable farming and food systems.

Innovative Finance from Canada projects positive impact on local communities.

Over 5000 Indigenous Communities evicted in Kiryandongo District

Petition To Land Inquiry Commission Over Human Rights In Kiryandongo District

Invisible victims of Uganda Land Grabs

Resource Center

- Land And Environment Rights In Uganda Experiences From Karamoja And Mid Western Sub Regions

- REPARATORY AND CLIMATE JUSTICE MUST BE AT THE CORE OF COP30, SAY GLOBAL LEADERS AND MOVEMENTS

- LAND GRABS AT GUNPOINT REPORT IN KIRYANDONGO DISTRICT

- THOSE OIL LIARS! THEY DESTROYED MY BUSINESS!

- RESEARCH BRIEF -TOURISM POTENTIAL OF GREATER MASAKA -MARCH 2025

- The Mouila Declaration of the Informal Alliance against the Expansion of Industrial Monocultures

- FORCED LAND EVICTIONS IN UGANDA TRENDS RIGHTS OF DEFENDERS IMPACT AND CALL FOR ACTION

- 12 KEY DEMANDS FROM CSOS TO WORLD LEADERS AT THE OPENING OF COP16 IN SAUDI ARABIA


Trending

- African women push for reparations and environmental accountability after landmark Climate Justice Day.

- East African women unite and meet in Nairobi to develop strategies to protect communal tenure systems and collectively resist false climate solutions.

- Nigerian Banks under fire over ESG failures as a new report exposes Weak Climate and Human Rights Compliance.

- UN Experts Put Tanzanian Government on Notice – “Ensure Transparency and Respect for Indigenous Peoples’ Rights in Ngorongoro”

- Maasai protest evictions from Ngorongoro as UN experts warn conservation must respect rights

- Environmentalists Call for Stronger Enforcement as Wetland and Forest Destruction Accelerates